Meet MERV – Electronics Today (Australia) April 1971

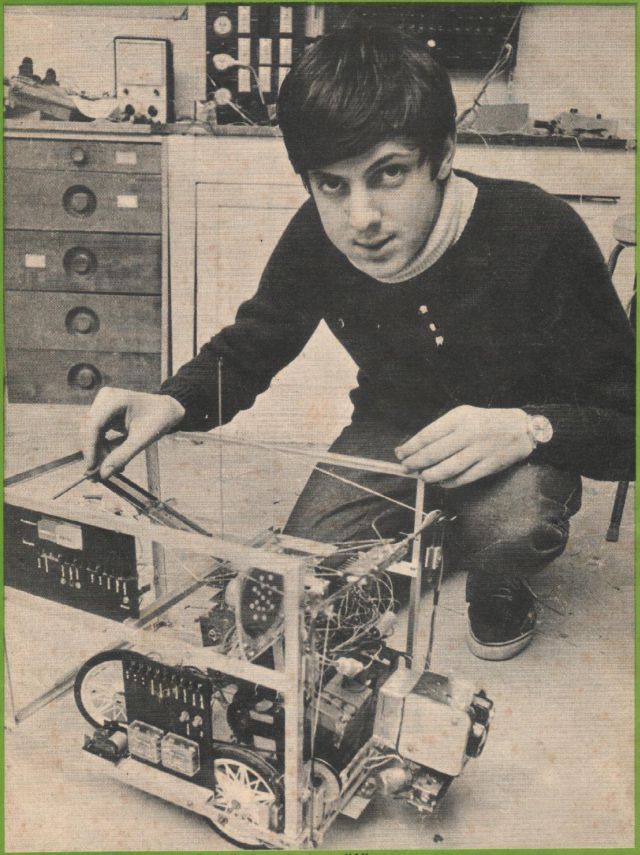

MERV stands for Mobile Environmental Response Vehicle. Its creator, Peter Vogel, built it to demonstrate his theory on artificial intelligence.

'IS it true that machines are incapable of intelligence, or can they in fact be endowed with reasoning power?'

One answer, in the form of an 82-page thesis, won the 1970 School Science Research prize for 15-year-old Peter Vogel, then a fourth-form student at Cranbrook School, Sydney.

The thesis, 'An Investigation into Artificial Intelligence', outlines Peter's reasoning and subsequent conclusions. It also describes in detail the design and construction of his 'mobile environmental response vehicle' (MERV) which, he suggests, qualifies as an 'intelligent machine'. His thesis is predicated on definitions of 'instinct' and 'intelligence' which, characteristically, he formulated himself.

INSTINCT he defines as 'basic knowledge and motives possessed at birth, which is built on by the process of intelligence; instinct includes the method of carrying out intelligence.' INTELLIGENCE, Peter says, 'is the process of drawing logical conclusions by correlating a particular experience with memory of previous experience and instinct.'

He qualifies these definitions by the admission that they are probably much too simplistic, but feels they are adequate for his purpose.

There are, he states, four basic requirements for an intelligent machine.

INPUT — a system for receiving data for processing.

MEMORY — some system for storing information and which can be altered readily.

LOGIC SYSTEM — capable of carrying out logical processes of intelligent reasoning.

OUTPUT — providing an external indication of the machine's intelligent activities.

MERV uses all four in pursuit of his life-purpose, which is 'self-preservation'. He roams about until he encounters an unknown object, performs various tests on it, and turns and moves away if the object is 'hostile'.

He incorporates an in-built self-preserving 'instinct' plus the ability to acquire and store environmental knowledge which is used to 'preserve its life'. This ability Peter refers to as 'intelligence'.

Having sensed the presence of an object, MERV goes through a predetermined sequence:

1. Stops at a 'safe' distance.

2. Tests to see what the object is.

3. Searches for memory of previously inflicted 'pain'.

4. Takes evasive action if deemed necessary, following intelligent reasoning.
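The four steps above can be sketched in a few lines of Python. This is only an illustration, not Peter's circuit: the function name `encounter`, the three-value object signature and the "carry on" outcome for a harmless object are all assumptions made for the example.

```python
from typing import Callable, Dict, Tuple

# Assumed encoding of one test result: (bright, metallic, large).
Signature = Tuple[bool, bool, bool]


def encounter(test_object: Callable[[], Signature],
              pain_memory: Dict[Signature, bool]) -> str:
    """Run the four-step sequence once and return the action taken."""
    # 1. Stop at a 'safe' distance (no motor control is modelled here).
    # 2. Test to see what the object is.
    signature = test_object()
    # 3. Search memory for previously inflicted 'pain'.
    painful = pain_memory.get(signature, False)
    # 4. Take evasive action if deemed necessary.
    return "retreat" if painful else "carry on"


# One remembered 'pain': a bright, metallic, small object.
memory = {(True, True, False): True}
print(encounter(lambda: (True, True, False), memory))
print(encounter(lambda: (False, False, False), memory))
```

The first call meets an object that matches the remembered pain and retreats; the second meets an unfamiliar object and carries on.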

A large number of sensors are required to effectively determine whether an object is 'safe' or 'hostile'. Peter describes a number of sensors, including:

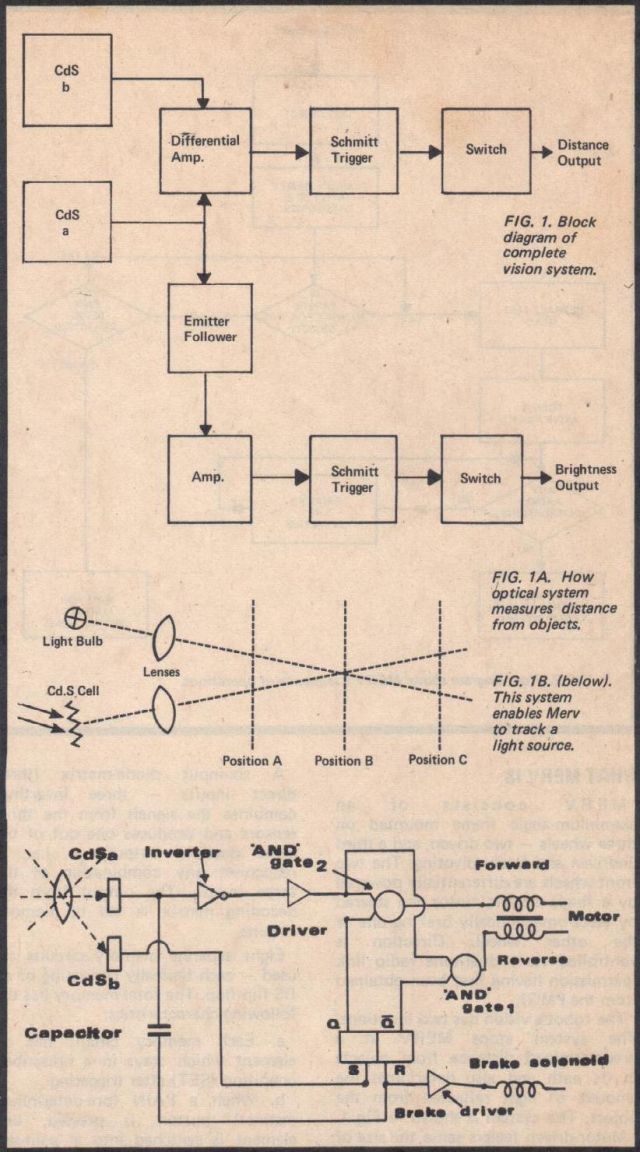

a. An optical sensor which locates objects and stops MERV 10"-12" away.

b. A brightness detector.

c. Tone discriminators to determine whether objects are emitting 'hostile' sounds.

d. Heat sensors.

e. Sensors for determining hardness of objects.

f. Sensors for determining if objects are metallic or non-metallic.

g. Devices for determining size, smell, and taste.

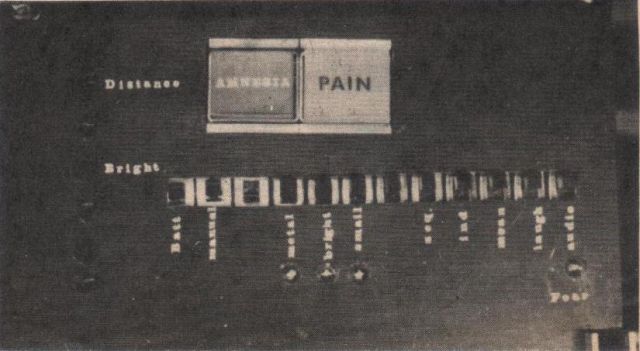

Limited finances precluded the use of a large number of sensors — the builder realising that each additional sensor implied a doubling of the memory's bit capacity. It was necessary to compromise. The final choice was to test for brightness, test whether or not an object was metallic, and check on physical size.
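The doubling is easy to check with a little arithmetic. The sketch below assumes that each sensor gives a single yes/no answer and that the memory needs one bit for every possible combination of answers. The function name `memory_cells` is invented for the example.

```python
def memory_cells(num_sensors: int) -> int:
    """One-bit cells needed to cover every combination of yes/no sensors."""
    return 2 ** num_sensors


# Each extra sensor doubles the requirement.
for n in range(1, 8):
    print(f"{n} sensors -> {memory_cells(n)} cells")
```

On this assumption the three tests finally chosen call for eight cells, while seven yes/no sensors would already call for 128.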

human instinct — it is present at birth and does not change without external interference such as brain surgery".

WHAT MERV DOES

The robot's predetermined behavioural pattern is shown diagramatically in Fig. 2 ( a three-stage binary counter ensures that the correct sequence is followed). Starting at the top, MERV works his way down, carrying out the operations represented by the squares and making the decisions represented by the diamonds. All decisions are of a Yes/No nature and are made by standard logic gates.

MERV responds to 'hostile' objects by turning and moving away — the results of his actions are also expressed audibly. As he is controlled by the fear of pain and the pursuit of pleasure (pleasure being the absence of pain!), MERV's audible response is either a moan or a laugh. Both are generated electronically. The moan is a descending tone produced by a unijunction/transistor combination. The laugh response is produced by a similar circuit and consists of a descending tone modulated at 10Hz. Individual steady tones are also used. These report the result of MERV's testing for size, composition and brightness.

Manual programming allows a 'pain' signal to be placed in a specific

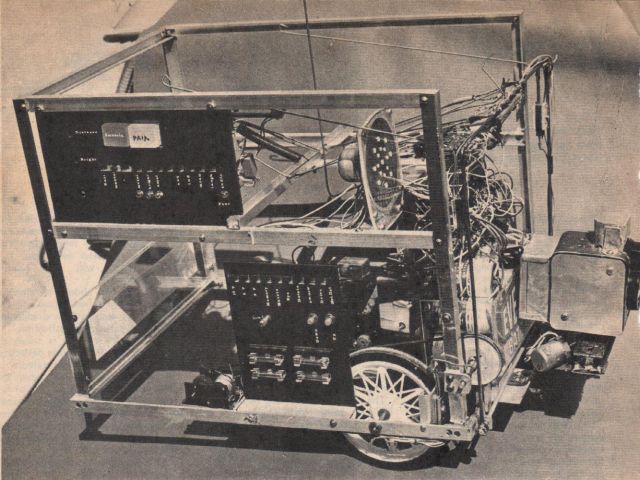

WHAT MERV IS

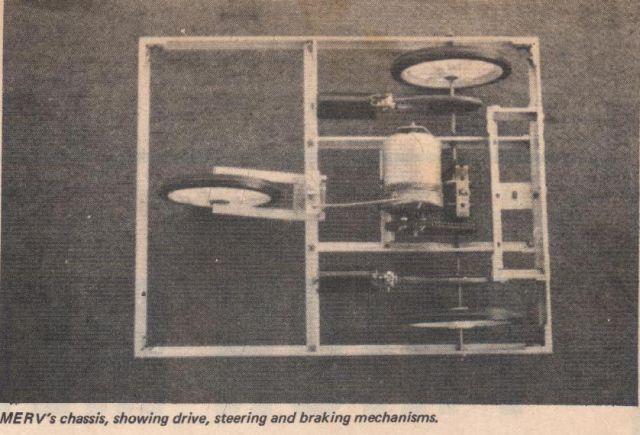

MERV consists of an aluminium-angle frame mounted on three wheels — two driven, and a third undriven and freely pivoting. The two front wheels are differentially powered by a single electric motor and steered by electromagnetically braking one or the other wheel. Direction is controlled via a dual-tone radio link (permission having first been obtained from the PMG).

The robot's vision has two functions. The system stops MERV at a predetermined distance from objects in its path and also determines the amount of light reflected from the object. The system is shown in Fig 1. Motor-driven feelers sense the size of objects — these feelers fold in the middle, allowing MERV to turn and retreat if a response is 'unfavourable'. Two feelers are used, each free to move independently. Series connected switches sense the vertical obstruction of either feeler.

The inductance of a coil is changed if metal is brought into the immediate vicinity, and this principle is exploited in the 'metal' detecting circuit. Ceramic resonators were used in the final design.

A six-input diode-matrix (three direct inputs — three inverting) combines the signals from the three sensors and produces one out of the eight possible outputs — i.e., it recognises any combination of the three inputs. The output from the decoding matrix is fed to memory circuits.

Eight separate memory circuits are used — each basically consisting of an RS flip-flop. The total memory has the following characteristics:

a. Each memory circuit has an element which stays in a prescribed condition (SET) after triggering.,

h. When a PAIN (pre-determined instinct) button is pressed, one element is switched into a 'pain-set' condition. The seven remaining elements remain in the condition they were in before the 'pain' button was pressed.

c. All memory elements can be cleared by pressing an 'amnesia' button.

MERV's instinct consists of circuitry which ensures that his approach to a problem-situation follows a predetermined pattern. Nothing short of a soldering iron can change it. 'This', says Peter, 'is analogous to memory location before MERV tests an object. It is thus possible to determine which objects MERV fears before actual testing.
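The decoding matrix and the eight memory circuits described above can be modelled roughly in Python. This is a sketch of the logic only, not of the diode and flip-flop hardware. The names `decode` and `PainMemory`, and the order in which the three sensor bits are combined, are assumptions made for the example.

```python
from typing import List


def decode(bright: bool, metallic: bool, large: bool) -> int:
    """Three-to-eight decode: pick one of eight lines (0-7) from three inputs."""
    # Assumed bit order: brightness is the high bit, size the low bit.
    return (int(bright) << 2) | (int(metallic) << 1) | int(large)


class PainMemory:
    """Eight one-bit cells, each behaving like a set/reset flip-flop."""

    def __init__(self) -> None:
        self.cells: List[bool] = [False] * 8

    def press_pain(self, line: int) -> None:
        # Set only the selected cell; the other seven keep their state.
        self.cells[line] = True

    def press_amnesia(self) -> None:
        # Clear every cell at once.
        self.cells = [False] * 8

    def fears(self, line: int) -> bool:
        return self.cells[line]


memory = PainMemory()
memory.press_pain(decode(bright=True, metallic=True, large=False))
print(memory.fears(decode(True, True, False)))   # same combination
print(memory.fears(decode(False, True, False)))  # different combination
memory.press_amnesia()
print(any(memory.cells))
```

Pressing 'pain' marks exactly one of the eight combinations, and 'amnesia' wipes all of them, matching points b and c above.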

MERV can be directed to an object via the radio link or allowed to wander around until an object is encountered. The memory circuits may be programmed to experience 'pain' when certain objects are evaluated, or, if power is momentarily disconnected, 'pain' signals may be locked into the memory in a quasi-random fashion. In one experiment 'pain' was externally inflicted when MERV examined the following objects:

a. Black wooden box.

b. Brick.

c. Small white metal garbage can.

d. Large black metal box.

e. Upright broom.

f. Black toy car.

g. White four gallon drum.

h. Half-gallon plastic detergent bottle.

After programming to fear these objects, MERV reported on the following:

a. Chair — reported as a black wooden box.

b. Light-coloured book on end — reported as upright broom.

c. Family cat — no decision as cat was singularly uncooperative!

d. Electric iron — reported as garbage can.

e. Gallon bottle of sulphuric acid — reported as detergent bottle.

f. Hammer — as toy car.
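These reports make sense once each object is reduced to the three yes/no readings MERV can take. The sketch below is a guess at how that might have worked. The readings given to each object are assumptions for illustration, since the article does not list MERV's actual sensor outputs, and the names `TRAINED` and `report` are invented.

```python
from typing import Dict, Tuple

# Assumed readings, in the order (bright, metallic, large).
# These values are guesses; they are not taken from the thesis.
TRAINED: Dict[Tuple[bool, bool, bool], str] = {
    (False, False, True): "black wooden box",
    (False, False, False): "brick",
    (True, True, False): "small white metal garbage can",
    (False, True, True): "large black metal box",
    (True, False, True): "upright broom",
    (False, True, False): "black toy car",
    (True, True, True): "white four gallon drum",
    (True, False, False): "half-gallon plastic detergent bottle",
}


def report(bright: bool, metallic: bool, large: bool) -> str:
    """Name the trained object that shares these three readings."""
    return TRAINED[(bright, metallic, large)]


# A chair, if read as dark, non-metallic and large:
print(report(False, False, True))  # black wooden box
# A hammer, if read as dark, metallic and small:
print(report(False, True, False))  # black toy car
```

With only eight possible combinations, any new object must land on one of the eight trained ones, which is why a chair can come out as a black wooden box.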

Are these intelligent results?

'At first sight', says Peter, 'the answer must be no. But', he maintains, 'although the decisions are inaccurate, they are intelligent.

'Consider what a human would do under similar circumstances. Suppose he has never seen a bottle before. Give him a bottle of detergent and tell him what it is. He notes its important characteristics and stores them in his memory. The data will probably include:

Detergent bottle

Light colour.

Fairly small.

Narrower at top than bottom.

Room temperature.

Not heavy.

Removable top.

Liquid inside.'

'Now give this person a bottle of sulphuric acid to evaluate. He notices qualities identical to the detergent bottle and, using his intelligence, draws the conclusion that this also is a bottle of detergent. If he remembered how the detergent smells, he would conclude otherwise, but within the capacity of his senses he has made an intelligent, albeit wrong, decision.' Peter insists that, although MERV has limited discriminating ability, he does draw intelligent conclusions. 'He draws conclusions about what an object is by instinct (he is wired to do this), then he correlates this conclusion with previous experience by referring to his memory, to decide whether he is likely to receive pain. The conclusion may not always be a correct one, but it is the logical one consistent with the capacity of his senses and memory.

'It can be argued that MERV's operation is not similar to a human's process of intelligence in that MERV is wired to follow a fixed pattern, whereas a human "wires himself". But is this the case? Is it not true that humans are also wired to follow a certain pattern over which the individual has no control?'

Peter's argument is that humans, like MERV, are built according to a strict plan, which has provision for a section specifically designed to be used for memory and logic, which together make intelligence possible. The only important difference between MERV and the human, intelligence-wise, is the ratio between intelligence and instinct. 'MERV is governed by instinct much more than a human is because a human's intelligence is much more comprehensive than MERV's,' says Peter.

'The limiting factors on MERV's intelligence are the amount of data available for him to work with and the limited capacity of his memory and logic circuitry. However, I see no reason why a machine as intelligent as a human could not be made, providing it has sufficient memory and its electronics are able to handle the amount of data stored in it.'

BEHAVIOURAL SCIENTIST JAN VERNON COMMENTS ON MERV'S 'INTELLIGENCE'

Is it possible for computing machines to think?

No — if one defines thinking as an activity peculiarly and exclusively human. Any such behaviour in machines therefore would have to be called 'thinking-like' behaviour.

No — if one postulates that there is something in the essence of thinking which is inscrutable, mysterious or mystical.

These negative answers are regarded by many behavioural scientists as 'unscientific'. Edward Feigenbaum and Julian Feldman, of Berkeley, say the question is to be answered by experiment and observation, comparing the behaviour of the machine with that behaviour of human beings to which the term is generally applied. Negative answers they regard as 'unscientifically dogmatic'. Their goal of artificial intelligence research is 'to construct computer programmes which exhibit behaviour that we call "intelligent behaviour" when we observe it in human beings.'

Research is all-important — Paul Armer (head of RAND Corporation's Computer Science Dept.) points out that there exists a continuum of intelligent behaviour, and the question of how far we can push machines out along that continuum is to be answered by research, not dogma.

Armer goes on to say that 'there is a strong personal factor in the attitude of many negativists: to concede that machines can exhibit intelligence is to admit that man has a rival in an area previously held to be within the sole province of man.'

It must always be remembered, in any meaningful comparison of human/machine intelligence, that very little is known about human intelligence. At the moment our knowledge of learning mechanisms for problem-solving is rudimentary. Marvin Minsky states: 'You regard an action as intelligent until you understand it. In explaining you explain away.'

Peter Vogel later went on to build the Fairlight music synthesizer, as used by Stevie Wonder.